“Everything we love about civilization is a product of intelligence, so amplifying our human intelligence with artificial intelligence has the potential of helping civilization flourish like never before – as long as we manage to keep the technology beneficial.”

Max Tegmark, President of the Future of Life Institute

What Is AI?

From Siri to self-driving cars, artificial intelligence (AI) is progressing rapidly. While science fiction often portrays AI as humanoid robots, AI can encompass anything from Google’s search algorithms to IBM’s Watson to autonomous weapons.

Artificial intelligence today is properly known as narrow AI (or weak AI), because it is designed to perform a narrow task (e.g., only facial recognition, only internet searches, or only driving a car). However, the long-term goal of many researchers is to create general AI (AGI or strong AI). While narrow AI may outperform humans at a specific task, such as playing chess or solving equations, AGI would outperform humans at nearly every cognitive task.

Why Research AI Safety?

In the short term, the goal of limiting AI’s negative impact on society motivates research in a wide range of fields, from economics and law to technical topics such as verification, validity, security, and control. Whereas it may be little more than a minor nuisance if your laptop crashes or gets hacked, it becomes far more important that an AI system does what you want it to do if it controls your car, your airplane, your pacemaker, your automated trading system, or your power grid. Another short-term challenge is preventing a devastating arms race in lethal autonomous weapons.

In the long run, an important question is what will happen if the quest for strong AI succeeds and an AI system becomes better than humans at all cognitive tasks. As I.J. Good pointed out in 1965, designing smarter AI systems is itself a cognitive task. Such a system could potentially undergo recursive self-improvement, triggering an intelligence explosion that would leave human intellect far behind. By inventing revolutionary new technologies, such a superintelligence might help us eradicate war, disease, and poverty, so the creation of strong AI might be the biggest event in human history. However, some experts have expressed concern that it might also be the last, unless we learn to align the AI’s goals with ours before it becomes superintelligent.

Some question whether strong AI will ever be achieved, while others insist that the creation of superintelligent AI is guaranteed to be beneficial. At FLI, we recognize both of these possibilities, but we also recognize the potential for an artificial intelligence system to cause great harm, whether intentionally or unintentionally. We believe that research today will help us better prepare for and prevent such potentially negative consequences in the future, allowing us to enjoy the benefits of AI while avoiding its pitfalls.

How Can AI Be Dangerous?

Most researchers agree that a superintelligent AI is unlikely to experience human emotions such as love or hatred, and that there is no reason to expect AI to become intentionally benevolent or malevolent. Instead, when it comes to how AI could become a risk, experts believe two scenarios are most likely:

- The AI is programmed to do something heinous: Autonomous weapons are artificial intelligence systems that are programmed to kill. In the hands of the wrong person, these weapons could easily cause mass casualties. Moreover, an AI arms race could inadvertently lead to an AI war that also results in mass casualties. To avoid being thwarted by the enemy, these weapons would be designed to be extremely difficult to simply “turn off,” so humans could plausibly lose control of such a situation. This risk is present even with narrow AI, but it grows as levels of AI intelligence and autonomy increase.

- The AI is programmed to do something beneficial, but it develops a destructive method for achieving its goal: This can happen whenever we fail to fully align the AI’s goals with ours, which is strikingly difficult. If you ask an obedient intelligent car to take you to the airport as fast as possible, it might get you there chased by helicopters and covered in vomit, doing not what you wanted but literally what you asked for. If a superintelligent system is tasked with an ambitious geoengineering project, it might wreak havoc on our ecosystem as a side effect, and view human attempts to stop it as a threat to be met.

Lethal autonomous weapons are not merely hypothetical: the technology is already available, and it poses significant risks.

As these examples illustrate, the main concern about advanced AI is not malevolence but competence. A superintelligent AI will be extremely good at accomplishing its goals, and if those goals aren’t aligned with ours, we have a problem. You’re probably not an evil ant-hater who steps on ants out of malice, but if you’re in charge of a hydroelectric green energy project and there’s an anthill in the region to be flooded, the ants will suffer. A key goal of AI safety research is to never place humanity in the position of those ants.

Why The Recent Interest In AI Safety

Stephen Hawking, Elon Musk, Steve Wozniak, Bill Gates, and many other big names in science and technology have recently expressed concern about the risks posed by AI in the media and through open letters, and they have been joined by many leading AI researchers. Why is the topic suddenly making headlines?

The idea that the quest for strong AI would ultimately succeed was long thought of as science fiction, centuries or more away. However, thanks to recent breakthroughs, many AI milestones that experts viewed as decades away merely five years ago have now been reached, prompting many experts to take seriously the possibility of superintelligence in our lifetime. While some experts still guess that human-level AI is centuries away, most AI researchers at the 2015 Puerto Rico Conference guessed that it would happen before 2060. Because the required safety research could take decades to complete, it is prudent to start it now.

Because AI has the potential to become more intelligent than any human, we have no surefire way of predicting how it will behave. We can’t rely on past technological developments as much of a guide, because we’ve never created anything that has the ability to outsmart us, wittingly or unwittingly. The best example of what we could face may be our own evolution. People now control the planet, not because we are the strongest, fastest, or largest, but because we are the smartest. If we’re no longer the smartest, can we be sure we will remain in control?

According to FLI, our civilization will thrive as long as we win the race between the increasing power of technology and the wisdom with which we manage it. In the case of AI technology, FLI believes that the best way to win is to support AI safety research rather than impede it.

The Top Myths About Advanced AI

A fascinating conversation is taking place about the future of artificial intelligence and what it will/should mean for humanity. There are intriguing controversies where the world’s leading experts disagree, such as: AI’s future impact on the job market; if/when human-level AI will be developed; whether this will lead to an intelligence explosion; and whether this is something we should welcome or fear. However, there are also many examples of boring pseudo-controversies caused by people misunderstanding and talking past each other. To help ourselves focus on the interesting debates and open questions, rather than on the misunderstandings, let’s clear up some of the most common myths.

Timeline Myths

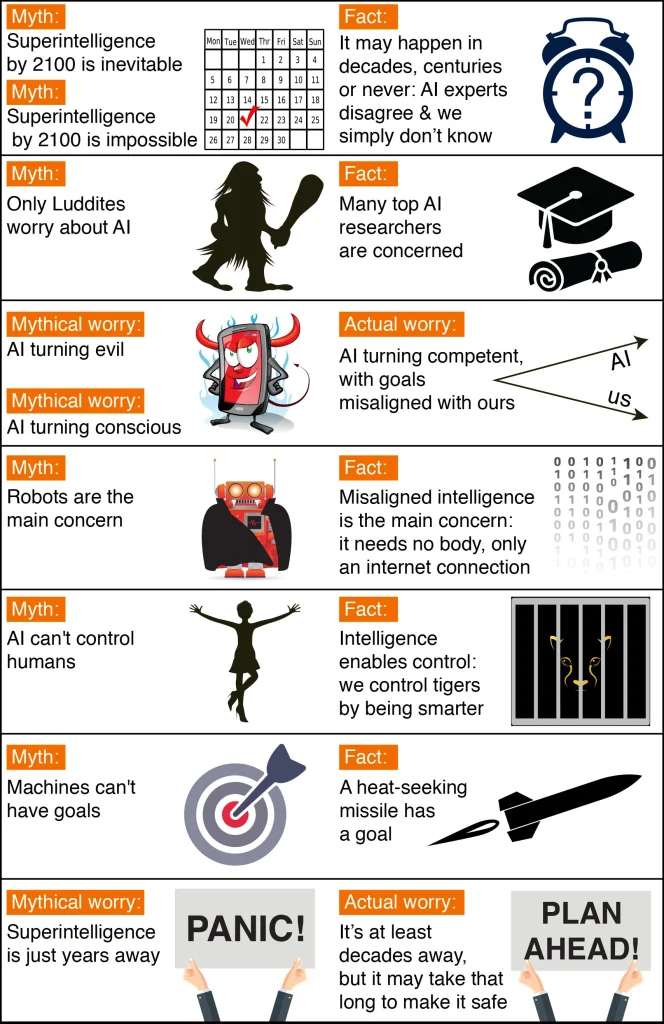

The first myth concerns the timeline: how long will it take for machines to vastly outperform human intelligence? A common misunderstanding is that we know the answer with absolute certainty.

One popular myth is that we know we’ll get superhuman AI this century. In fact, history is full of technological over-hyping. Where are the fusion power plants and flying cars we were promised we’d have by now? AI has also been over-hyped in the past, even by some of the founders of the field. For example, John McCarthy (who coined the term “artificial intelligence”), Marvin Minsky, Nathaniel Rochester, and Claude Shannon wrote this overly optimistic forecast about what could be accomplished in two months with stone-age computers: “We propose that a 2 month, 10 man study of artificial intelligence be carried out during the summer of 1956 at Dartmouth College […] An attempt will be made to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves. We think that a significant advance can be made in one or more of these problems if a carefully selected group of scientists work on it together for a summer.”

On the other hand, a popular counter-myth holds that we already know we won’t be able to create superhuman AI this century. Researchers have made a variety of estimates for how far we are from superhuman AI, but given the dismal track record of such techno-skeptic predictions, we can’t say with certainty that the probability is zero this century. For example, in 1933, less than 24 hours before Szilard’s invention of the nuclear chain reaction, Ernest Rutherford, arguably the greatest nuclear physicist of his time, declared that nuclear energy was “moonshine.” In 1956, Astronomer Royal Richard Woolley described interplanetary travel as “utter bilge.” The most extreme version of this myth holds that superhuman AI will never be realized because it is physically impossible. However, physicists know that a brain is made up of quarks and electrons arranged to function as a powerful computer, and that no physical law prevents us from creating even more intelligent quark blobs.

There have been a number of surveys asking AI researchers how many years from now they think we will have human-level AI with 50 percent probability. All of these surveys reach the same conclusion: the world’s leading experts disagree, so we simply don’t know. For example, in a poll of AI researchers at the 2015 Puerto Rico AI conference, the median answer was by the year 2045, but some researchers guessed hundreds of years or more.

There is also a related myth that people who worry about AI think it is only a few years away. In fact, most people on record as worrying about superhuman AI believe it is still decades away. They argue, however, that as long as we aren’t certain it won’t happen this century, it is prudent to start safety research now to prepare for the eventuality. Many of the safety problems associated with human-level AI are so hard that they may take decades to solve. So it is better to start researching them now rather than the night before some Red Bull-drinking programmers decide to switch one on.

Controversy Myths

Another common misconception is that the only people who are concerned about AI and advocating AI safety research are luddites who don’t understand the technology. When Stuart Russell, author of the standard AI textbook, mentioned this during his Puerto Rico talk, the audience laughed loudly. A related misconception is that supporting AI safety research is hugely controversial. In fact, to support a modest investment in AI safety research, people don’t need to be convinced that risks are high, merely that they are non-negligible, just as a modest investment in home insurance is justified by a non-negligible probability of the house burning down.

It may be that the media have made the AI safety debate seem more controversial than it really is. After all, fear sells, and articles that use out-of-context quotes to proclaim imminent doom are more likely to generate clicks than nuanced and balanced ones. As a result, two people who only know about each other’s positions from media quotes are likely to think they disagree more than they really do. For example, a techno-skeptic who only read about Bill Gates’ position in a British tabloid may mistakenly think Gates believes superintelligence to be imminent. Similarly, someone in the beneficial-AI movement who knows nothing about Andrew Ng’s position except his quote about overpopulation on Mars may mistakenly think he doesn’t care about AI safety, whereas in fact he does. The crux is simply that, because Ng’s timeline estimates are longer, he naturally tends to prioritize short-term AI challenges over long-term ones.

Myths About The Risks Of Superhuman AI

Many AI researchers scoff when they see this headline: “Stephen Hawking warns that rise of robots may be disastrous for mankind.” Many people have lost track of how many similar articles they’ve read. These articles are typically accompanied by an evil-looking robot wielding a weapon, and they suggest that we should be concerned about robots rising up and killing us because they have become conscious and/or evil. On a lighter note, such articles are actually quite impressive because they concisely summarize the scenario that AI researchers are unconcerned about. That scenario combines three distinct misconceptions: concern about consciousness, evil, and robots.

As you drive down the road, you have a subjective experience of colors, sounds, and so on. But does a self-driving car have a subjective experience? Does it feel like anything at all to be a self-driving car? Although this mystery of consciousness is interesting in its own right, it is irrelevant to AI risk. If you are struck by a driverless car, it makes no difference to you whether it is conscious. In the same way, what will affect us humans is what superintelligent AI does, not how it subjectively feels.

Another red herring is the fear of machines turning evil. The real concern is not malice, but competence. Because a superintelligent AI is by definition very good at achieving its goals, whatever they may be, we must ensure that its goals are aligned with ours. Humans do not generally dislike ants, but we are more intelligent than they are, so if we want to build a hydroelectric dam and there is an anthill nearby, the ants will suffer. The beneficial-AI movement seeks to keep humanity from falling into the same trap as the ants.

The consciousness misconception is related to the myth that machines can’t have goals. Machines can obviously have goals in the narrow sense of exhibiting goal-oriented behavior: the behavior of a heat-seeking missile is most economically explained as a goal to hit a target. If you feel threatened by a machine whose goals are misaligned with yours, then it is precisely its goals in this narrow sense that trouble you, not whether the machine is conscious and experiences a sense of purpose. If a heat-seeking missile were chasing you, you wouldn’t say, “I’m not worried, because machines can’t have goals!”

I sympathize with Rodney Brooks and other robotics pioneers who feel unfairly demonized by fearmongering tabloids, because some journalists seem obsessively fixated on robots and adorn many of their articles with evil-looking metal monsters with red shiny eyes. In fact, the main concern of the beneficial-AI movement is not robots but intelligence itself: specifically, intelligence whose goals are misaligned with ours. To cause us trouble, such misaligned superhuman intelligence needs no robotic body, merely an internet connection, which may enable it to outsmart financial markets, out-invent human researchers, out-manipulate human leaders, and develop weapons we cannot even understand. Even if building robots were physically impossible, a superintelligent and super-wealthy AI could easily pay or manipulate many humans to unwittingly do its bidding.

The robot misconception is related to the myth that machines can’t control humans. Intelligence enables control: humans control tigers not because we are stronger, but because we are smarter. This means that if we cede our position as the smartest entities on our planet, we may also cede control.

The Interesting Controversies

Not wasting time on the above-mentioned myths lets us focus on true and interesting controversies where even the experts disagree. What sort of future do you want? Should we develop lethal autonomous weapons? What would you like to happen with job automation? What career advice would you give today’s youth? Do you prefer new jobs replacing the old ones, or a jobless society where everyone enjoys a life of leisure and machine-produced wealth? Further down the road, would you like us to create superintelligent life and spread it throughout our universe? Will we control intelligent machines, or will they control us? Will intelligent machines replace us, coexist with us, or merge with us? What will it mean to be human in the age of artificial intelligence? What would you like it to mean, and how can we make the future be that way? Please join the discussion!
